FEATURED INSIGHTS

-

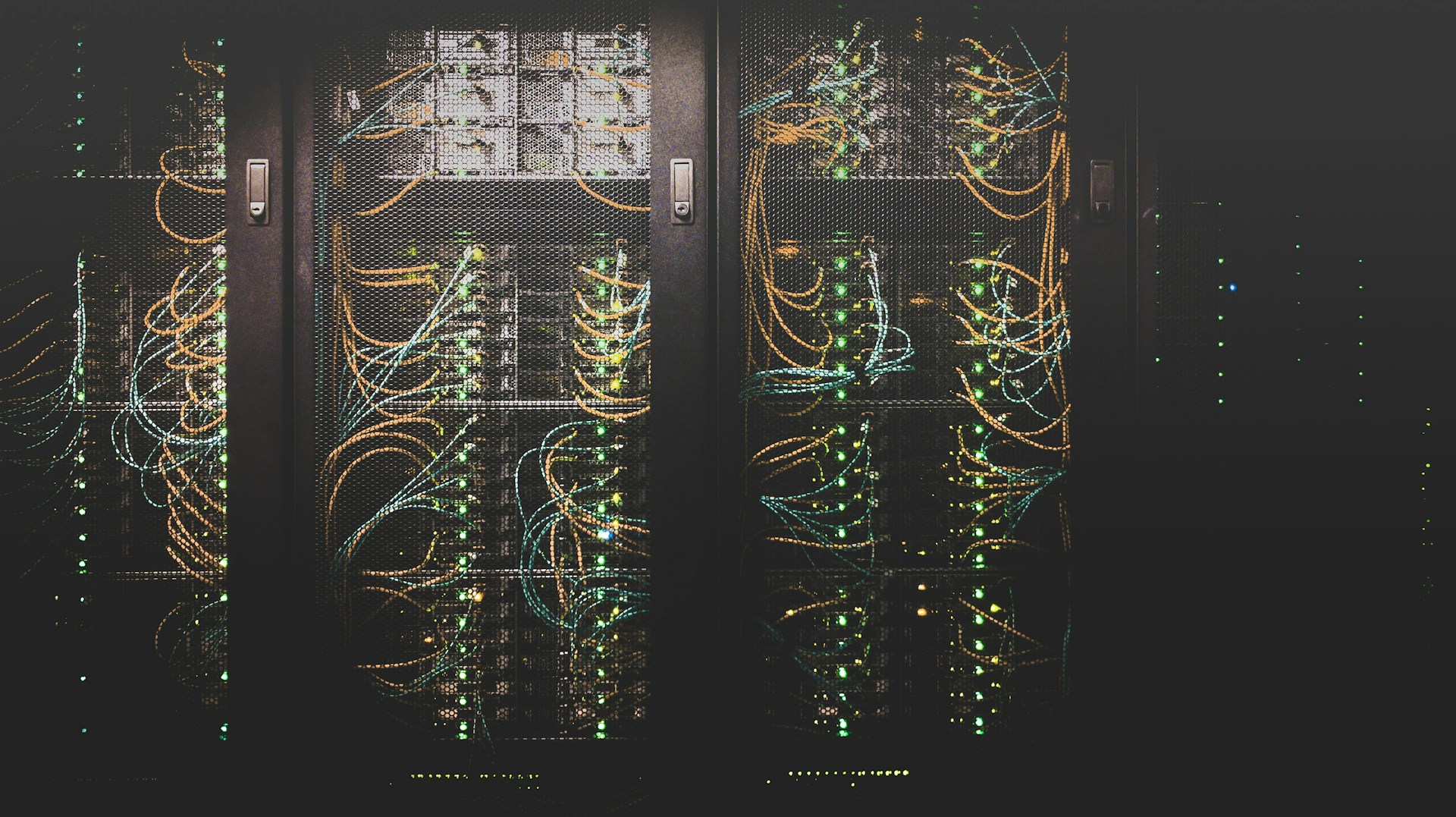

What Exactly is Data Engineering?

Like oil to a car, data fuels your business

In the digital age, data is the new oil. It powers decision-making, innovation, and even the products we use daily. But how does raw, unstructured data transform into actionable insights?

The answer lies in data engineering. While it might not always be in the spotlight, data engineering is the backbone of the modern data ecosystem. Let’s break down what it is and why it matters.


-

Excel VBA vs. Custom Data Solutions: When to Upgrade to a Dieseinerdata Web-based Analytics Platform

When to Upgrade Your Analytics

Excel has long been the go-to tool for businesses managing data, running reports, and performing basic analytics. It is familiar, flexible, and accessible to employees across different departments. However, as businesses scale, Excel’s limitations become apparent, making the transition to a more robust system necessary. Dieseinerdata has upgraded several clients. In this article, Dieseinerdata will explore the drawbacks of relying solely on Excel and identify the right time for businesses to upgrade to a web-based analytics platform.


-

A Beginner’s Guide to Key Data Analytics Terms

Key Analytics Terms to Make Informed Decisions

In today’s data-driven world, business professionals must understand key analytics terms to make informed decisions. Whether you’re working with data analysts or just starting your journey in business intelligence, knowing these fundamental concepts will help you communicate effectively and leverage data insights. We here at Dieseinerdata wrote a glossary of essential analytics terms every business professional should know.


-

A Guide to the CRISP-DM (Cross-Industry Standard Process for Data Mining) Method

The Key Strength of CRISP-DM is its Flexibility

The CRISP-DM (Cross-Industry Standard Process for Data Mining) methodology is a widely used framework for structuring data mining and analytics projects. Developed in the late 1990s, it provides a systematic approach to tackling data-related problems across various industries.


-

How to Clean and Prepare Your Data for Better Insights

Clean Data = the Foundation for your Company

In the world of data analytics and business intelligence, clean and well-prepared data is the foundation for accurate insights. Poor data quality leads to misleading conclusions, flawed decision-making, and wasted resources. Before diving into complex analysis or visualization, it’s crucial to ensure your data is free from errors, inconsistencies, and redundancies. In this guide, Dieseinerdata will walk through the essential steps to clean and prepare your data for better insights.

Step 1: Understand Your Data

Before cleaning data, take the time to explore and understand it. This includes:

- Identifying the source of your data (databases, spreadsheets, APIs, etc.).

- Checking for missing or inconsistent values.

- Understanding the format, structure, and expected ranges of data fields.

- Identifying anomalies or outliers.

Performing an initial exploratory data analysis (EDA) will give you a clearer picture of the data’s current state and guide your cleaning process.
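As a rough illustration of a first exploratory pass, the sketch below counts missing and distinct values per column. It uses only the Python standard library so it runs without extra dependencies; the sample records and the `profile` helper are hypothetical, not part of any particular toolkit.

```python
from collections import Counter

# Hypothetical sample records; None and "" stand in for missing values.
rows = [
    {"order_id": "1", "country": "USA", "amount": "19.99"},
    {"order_id": "2", "country": "", "amount": "250.00"},
    {"order_id": "3", "country": "United States", "amount": None},
]

def profile(records):
    """Return per-column missing counts and distinct-value counts."""
    missing = Counter()
    distinct = {}
    for record in records:
        for column, value in record.items():
            if value is None or value == "":
                missing[column] += 1
            else:
                distinct.setdefault(column, set()).add(value)
    return {
        column: {"missing": missing[column], "distinct": len(distinct.get(column, ()))}
        for column in records[0]
    }

report = profile(rows)
```

Here the `country` column would show one missing value and two distinct spellings of what is probably the same country, which is the kind of finding that guides the later cleaning steps.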

Step 2: Handle Missing Data

Missing data is one of the most common issues in datasets. You have several options to handle it, depending on the context:

- Remove Missing Values: If a small portion of data is missing, you can remove those rows or columns without significantly affecting the dataset.

- Impute Missing Values: For numerical data, you can replace missing values with the mean, median, or mode. For categorical data, the most common category can be used.

- Use Predictive Methods: Advanced techniques like regression or machine learning models can predict and fill missing values when appropriate.
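The imputation option can be sketched in a few lines. This is a minimal example, assuming missing values are represented as `None`; the `impute` helper and the sample columns are made up for illustration.

```python
from statistics import median, mode

def impute(values, strategy):
    """Replace None entries using the median (numeric) or mode (categorical)."""
    present = [v for v in values if v is not None]
    fill = median(present) if strategy == "median" else mode(present)
    return [fill if v is None else v for v in values]

ages = impute([34, None, 29, 41], "median")
plans = impute(["basic", "pro", None, "basic"], "mode")
```

The median is often preferred over the mean for skewed numeric columns because a few extreme values do not pull it far.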

Step 3: Standardize Data Formats

Inconsistent data formats can cause errors in analysis. Standardizing formats ensures uniformity across the dataset:

- Convert date formats to a common standard (e.g., YYYY-MM-DD).

- Ensure numerical values use consistent decimal separators and units.

- Normalize categorical data by using consistent naming conventions (e.g., “USA” vs. “United States”).
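One way to approach date and category standardization is shown below. The list of accepted date formats and the alias table are assumptions for this example and would need to match your actual data.

```python
from datetime import datetime

# Assumed input formats and alias table; extend both for real data.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%d.%m.%Y")
COUNTRY_ALIASES = {
    "usa": "United States",
    "u.s.": "United States",
    "united states": "United States",
}

def to_iso_date(text):
    """Parse a date in any known format and return it as YYYY-MM-DD."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"Unrecognized date format: {text!r}")

def normalize_country(name):
    """Map known spelling variants to one canonical label."""
    return COUNTRY_ALIASES.get(name.strip().lower(), name.strip())
```

Note that a slash-separated value such as 03/04/2024 is read month-first here. When a source mixes day-first and month-first dates, the format has to be decided per source rather than guessed per value.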

Step 4: Remove Duplicates

Duplicate records can inflate results and distort insights. Identifying and removing duplicates is essential:

- Use tools like SQL queries (SELECT DISTINCT), Excel functions (Remove Duplicates), or Python’s Pandas (drop_duplicates()).

- Check for near-duplicates caused by slight variations in data entry.
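Both kinds of duplicates can be sketched in plain Python. Exact duplicates are dropped by comparing whole records, while near-duplicates are flagged with a string-similarity ratio from the standard library's `difflib`. The 0.85 threshold is an arbitrary assumption and should be tuned on real data.

```python
from difflib import SequenceMatcher

def drop_exact_duplicates(records):
    """Keep the first occurrence of each identical record, preserving order."""
    seen = set()
    unique = []
    for record in records:
        key = tuple(sorted(record.items()))
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique

def near_duplicates(names, threshold=0.85):
    """Return pairs of names whose similarity ratio meets the threshold."""
    pairs = []
    for i, first in enumerate(names):
        for second in names[i + 1:]:
            ratio = SequenceMatcher(None, first.lower(), second.lower()).ratio()
            if ratio >= threshold:
                pairs.append((first, second))
    return pairs
```

Near-duplicate pairs are returned for review rather than merged automatically, since two similar names can still be different entities. The pairwise comparison grows quadratically with the number of names, so it suits small lists or pre-grouped data.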

Step 5: Detect and Correct Errors

Errors such as typos, incorrect values, and inconsistent entries must be corrected:

- Use data validation rules to detect out-of-range values.

- Cross-check data against reference databases where applicable.

- Utilize automated scripts to flag anomalies for review.
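A validation rule can be as simple as a named range check. The sketch below flags out-of-range values for review instead of changing them; the column names and limits in `RULES` are hypothetical.

```python
# Hypothetical validation rules: column name -> predicate that returns True for valid values.
RULES = {
    "age": lambda v: 0 <= v <= 120,
    "discount_pct": lambda v: 0 <= v <= 100,
}

def flag_anomalies(records, rules=RULES):
    """Return (row_index, column, value) for every value that fails its rule."""
    flagged = []
    for index, record in enumerate(records):
        for column, is_valid in rules.items():
            value = record.get(column)
            if value is not None and not is_valid(value):
                flagged.append((index, column, value))
    return flagged

issues = flag_anomalies([
    {"age": 34, "discount_pct": 10},
    {"age": 340, "discount_pct": 10},
    {"age": 28, "discount_pct": 150},
])
```

Keeping the rules in one table makes them easy to review with the people who know what the valid ranges should be.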

Step 6: Normalize and Transform Data

Data normalization and transformation help make the data suitable for analysis:

- Scaling: Rescale numerical values using techniques like Min-Max normalization or standardization.

- Encoding: Convert categorical data into numerical format for machine learning applications (e.g., one-hot encoding).

- Parsing: Break down complex fields (e.g., “Full Name” into “First Name” and “Last Name”).
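The three transformations above can be sketched with small helper functions. These are simplified illustrations with hypothetical names, and the name splitter in particular assumes a plain "first last" layout.

```python
from statistics import mean, pstdev

def min_max(values):
    """Rescale values linearly to the range [0, 1]."""
    low, high = min(values), max(values)
    if high == low:
        return [0.0 for _ in values]
    return [(v - low) / (high - low) for v in values]

def standardize(values):
    """Center on the mean and divide by the population standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]

def one_hot(values):
    """Encode each category as a 0/1 indicator per distinct label."""
    labels = sorted(set(values))
    return [{label: int(v == label) for label in labels} for v in values]

def split_full_name(full_name):
    """Split on the first space; everything after it goes into last_name."""
    first, _, last = full_name.strip().partition(" ")
    return {"first_name": first, "last_name": last}
```

Min-Max scaling keeps values in a fixed range but is sensitive to outliers, while standardization is usually the safer choice when a column contains a few extreme values.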

Step 7: Validate and Document the Cleaning Process

After cleaning, validate the results to ensure data integrity:

- Perform spot checks and summary statistics to confirm expected distributions.

- Compare cleaned data with raw data to ensure no loss of crucial information.

- Document the cleaning steps for reproducibility and future reference.
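A before-and-after comparison might look like the following sketch, which reports how many rows were dropped and how far the summary statistics moved. The helper names and the sample column are assumptions for illustration.

```python
from statistics import mean

def summarize(values):
    """Basic summary statistics for a numeric column, ignoring missing values."""
    present = [v for v in values if v is not None]
    return {"count": len(present), "min": min(present), "max": max(present), "mean": mean(present)}

def compare(raw, cleaned):
    """Report how many rows were dropped and how the summary statistics shifted."""
    before, after = summarize(raw), summarize(cleaned)
    return {
        "rows_dropped": len(raw) - len(cleaned),
        "mean_shift": after["mean"] - before["mean"],
        "range_before": (before["min"], before["max"]),
        "range_after": (after["min"], after["max"]),
    }

check = compare(raw=[10, 12, 11, 900, None], cleaned=[10, 12, 11])
```

A large shift in the mean, as in this example, is not necessarily a problem, but it is worth recording in the cleaning documentation along with the reason the rows were removed.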

Step 8: Automate Data Cleaning for Future Use

Manually cleaning data is time-consuming. Automating the process improves efficiency and consistency:

- Use data pipelines with automated validation and cleaning steps.

- Leverage scripting languages like Python (Pandas, NumPy) or tools like Alteryx and Talend.

- Schedule regular data quality checks and cleaning routines.
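One simple way to automate cleaning is to express each step as a function and run them in a fixed order, logging the row count after every step. The step functions and the `run_cleaning` helper below are hypothetical; a scheduler or a dedicated pipeline tool could call the same entry point on a recurring basis.

```python
def strip_whitespace(records):
    """Trim leading and trailing whitespace from every string value."""
    return [{k: v.strip() if isinstance(v, str) else v for k, v in r.items()} for r in records]

def drop_incomplete(records):
    """Remove records that contain any missing (None or empty) value."""
    return [r for r in records if all(v not in (None, "") for v in r.values())]

# Hypothetical step list; order matters because later steps assume earlier ones ran.
CLEANING_STEPS = [strip_whitespace, drop_incomplete]

def run_cleaning(records, steps=CLEANING_STEPS):
    """Apply each step in order and log the row count after every step."""
    log = [("input", len(records))]
    for step in steps:
        records = step(records)
        log.append((step.__name__, len(records)))
    return records, log

cleaned, audit_log = run_cleaning([
    {"name": " Ada ", "email": "ada@example.com"},
    {"name": "Bob", "email": "  "},
])
```

The log doubles as a lightweight quality check: a step that suddenly removes far more rows than usual is a signal that the incoming data has changed.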

Conclusion: Better Data, Better Decisions

Clean and well-prepared data leads to more accurate and actionable insights, empowering organizations to make data-driven decisions with confidence. Following these steps ensures data reliability, minimizes errors, and enhances analytical outcomes.

If your organization struggles with data quality or needs expert guidance in data cleaning and preparation, Dieseinerdata can help. Our team specializes in building company data reporting platforms and web applications, transforming raw, messy data into high-quality, actionable intelligence. Contact us today to ensure your data works for you, not against you!

-

AI and Automation in Data Analytics: What’s Hype and What’s Real?

Examining where AI truly adds value and where expectations need to be tempered.

In the rapidly evolving world of data analytics, artificial intelligence (AI) and automation have become buzzwords that dominate discussions. Companies across industries are investing heavily in AI-driven analytics, expecting transformative outcomes. However, not all promises of AI and automation in analytics hold up under scrutiny. While some applications genuinely revolutionize decision-making and efficiency, others are overhyped and fail to deliver tangible results.


-

What Exactly is a Data Pipeline?


In today’s data-driven world, organizations rely on data to make informed decisions, drive innovation, and stay competitive. Raw data is often messy, scattered across various sources, and not immediately usable. This is where data pipelines come into play. But what exactly is a data pipeline? Let’s break it down.

Definition of a Data Pipeline

A data pipeline is a series of processes that automate the movement and transformation of data from one system to another. Think of it as a pathway that raw data travels through to become valuable insights. The pipeline’s primary goal is to ensure data is collected, processed, and delivered reliably and efficiently.

A data pipeline typically involves three main stages:

- Ingestion: Capturing raw data from various sources such as databases, APIs, sensors, or user inputs.

- Processing: Cleaning, transforming, and enriching the data to make it usable. This may involve filtering, aggregating, or even applying machine learning models.

- Storage and Output: Delivering the processed data to a destination like a database, data warehouse, or visualization tool for analysis.
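The three stages can be illustrated with a tiny end-to-end sketch. It assumes a CSV string as the source and an in-memory SQLite database as the destination; the data, table name, and function names are all invented for the example.

```python
import csv
import io
import sqlite3

# Hypothetical raw input; in practice this could come from a file, API, or database.
RAW_CSV = "region,amount\nnorth,100\nsouth,\nnorth,50\n"

def ingest(text):
    """Ingestion: read raw rows from a CSV source."""
    return list(csv.DictReader(io.StringIO(text)))

def process(rows):
    """Processing: drop rows without an amount and aggregate totals per region."""
    totals = {}
    for row in rows:
        if row["amount"]:
            totals[row["region"]] = totals.get(row["region"], 0.0) + float(row["amount"])
    return totals

def store(totals, connection):
    """Storage and output: write the aggregated results to a table."""
    connection.execute(
        "CREATE TABLE IF NOT EXISTS sales_by_region (region TEXT PRIMARY KEY, total REAL)"
    )
    connection.executemany(
        "INSERT OR REPLACE INTO sales_by_region VALUES (?, ?)", totals.items()
    )
    connection.commit()

connection = sqlite3.connect(":memory:")
store(process(ingest(RAW_CSV)), connection)
result = connection.execute(
    "SELECT region, total FROM sales_by_region ORDER BY region"
).fetchall()
```

Keeping each stage in its own function means a source or destination can be swapped without touching the processing logic.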

-

5 Data Analytics Methods to Understand Your Customers Better

Use data analytics to better understand customers by segmenting them into actionable groups, predicting their behaviors, analyzing their sentiments, mapping their journey across touchpoints, and personalizing experiences based on their preferences and history.

1. Customer Segmentation

How: Use clustering algorithms or other segmentation techniques to group customers based on demographics, purchasing behavior, or preferences.

Why: Understand distinct customer groups to tailor marketing campaigns and product offerings.

Example: Segmenting customers into “frequent buyers,” “seasonal shoppers,” and “one-time buyers” to create targeted promotions.
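The example segments could be approximated with simple rules before reaching for a clustering algorithm. The sketch below is rule-based rather than clustering, and its thresholds and the catch-all "occasional buyer" label are assumptions added for illustration.

```python
# Hypothetical thresholds; tune them against your own order history.
FREQUENT_MIN_ORDERS = 6
SEASONAL_MAX_DISTINCT_MONTHS = 2

def segment(order_months):
    """Assign a segment from a non-empty list of calendar months (1-12) with an order."""
    if len(order_months) == 1:
        return "one-time buyer"
    if len(set(order_months)) <= SEASONAL_MAX_DISTINCT_MONTHS:
        return "seasonal shopper"
    if len(order_months) >= FREQUENT_MIN_ORDERS:
        return "frequent buyer"
    return "occasional buyer"

customers = {
    "c1": [12],
    "c2": [11, 12, 11, 12],
    "c3": [1, 2, 4, 5, 7, 9, 10],
}
segments = {customer_id: segment(months) for customer_id, months in customers.items()}
```

Rules like these are easy to explain to a marketing team. Clustering becomes more useful once there are many behavioral variables and no obvious cut-off values.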


-

Understanding Granularity in Data Analytics & Business Reporting: Why It Matters and How to Get It Right?

Granularity is one of the cornerstone concepts in data analytics and business reporting, influencing how data is structured, analyzed, and interpreted. But what does granularity mean, and why is it so crucial?

Let’s dive into the details and explore how understanding granularity can lead to more accurate and insightful data analysis.

